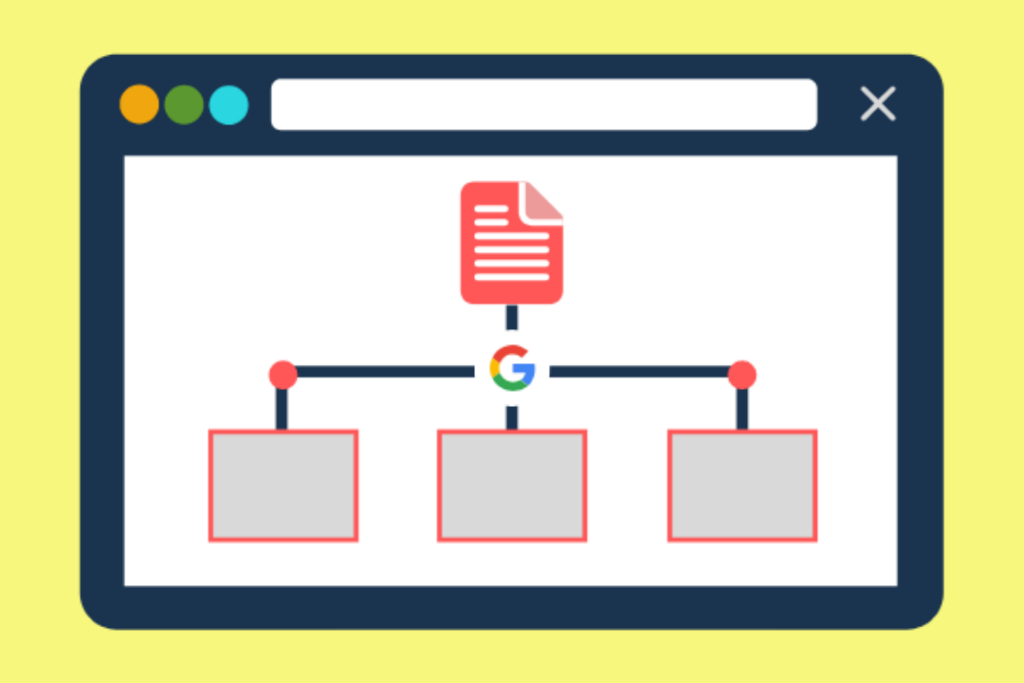

A sitemap is an XML file that lists the URLs of the pages on your site.

A sitemap is an XML file that lists the URLs of the pages on your site that you want search engines to find. Web crawlers use it to crawl a website more efficiently and to learn about new pages soon after they are added, which makes it easier for Google and other search engines to locate information on the site. XML is flexible about what you can record for each page, which is why it is more popular than the other sitemap formats, such as a plain text list of URLs. The most basic information that you can include about each page is:

- The URL for the page

- When it was last updated

- How often the page changes

If a page changes frequently, update your sitemap more often too, so that search engines keep up with your changes. You can write the XML by hand, but as a site grows it is easier to generate the file automatically or semi-automatically, for example with a WordPress plugin or an online sitemap generator.
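To make the file format concrete, here is a minimal sketch of generating a sitemap with Python's standard library. The element names (`urlset`, `url`, `loc`, `lastmod`, `changefreq`) and the namespace come from the sitemaps.org protocol. The `build_sitemap` helper, the page list, and the example.com URLs are assumptions made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML (as a string) for a list of page dicts.

    Each dict needs a "loc" key; "lastmod" and "changefreq" are optional.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["loc"]
        if "lastmod" in page:
            ET.SubElement(url, "lastmod").text = page["lastmod"]
        if "changefreq" in page:
            ET.SubElement(url, "changefreq").text = page["changefreq"]
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages, for illustration only.
pages = [
    {"loc": "https://www.example.com/", "lastmod": "2024-01-15", "changefreq": "weekly"},
    {"loc": "https://www.example.com/about", "lastmod": "2023-11-02"},
]

print(build_sitemap(pages))
```

In practice a plugin or generator tool does this for you. The sketch only shows that the file is an ordinary XML list with one entry per page.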

Now that you have a basic idea of what a sitemap is, let’s look at why sitemaps matter for SEO.

Sitemaps help Googlebot crawl your site easily and quickly.

Sitemaps are an important part of Search Engine Optimization. A sitemap is a list of the important URLs on your website that you want Googlebot, or any other search engine bot, to crawl and index. The main reason for having one is that it helps Googlebot crawl your site easily and quickly. It also helps search engines index the pages that matter most to you and that you want people to know about.

Sitemaps also help Google find new or fresh pages on your site.

A sitemap tells search engines about the new pages on your site. Let’s say you just added a page. Google discovers most pages by following links, so if another page that Google already crawls links to the new one, Google will usually find it, although that can take days or weeks. If nothing links to the page, Google may never find it unless you submit an updated XML sitemap in Google Search Console (formerly Google Webmaster Tools, or GWT).

A sitemap can include each page’s URL, the date it was last updated, and how often it changes.

A sitemap can include each page’s URL, the date it was last updated, and how often it changes. Page titles and meta descriptions are not part of the sitemap; search engines read those from the pages themselves. This information helps search engines like Google crawl the website and index it properly. In short, it makes your website more visible on the internet.

The more visible your website is on the internet, the better its chances of being noticed by a search engine. A sitemap is a good way to make sure that a search engine crawler reaches all the important pages of your site rather than just one or two, which can happen when pages are poorly linked.

Sitemaps are not required for Google to crawl and index your site, but they can help Google discover new pages more quickly than it otherwise would.

To put it briefly, a sitemap is a list of the pages on your website that are accessible to your visitors. Think of it like a map that you would use to find the exact location of something.

Keeping track of every page by hand, in a database or a spreadsheet, would be really difficult. That’s where sitemaps come into play: they help Google crawl and index websites more effectively and efficiently.

A single sitemap file can hold at most 50,000 URLs.

Be mindful of how many URLs you include in each sitemap. The sitemap protocol limits a single file to 50,000 URLs and 50 MB uncompressed, and search engines may reject a file that exceeds either limit. If your site has more pages than that, split them across multiple sitemap files and list those files in a sitemap index so that all pages are included.
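Splitting a large URL list across several files can be sketched as follows. The 50,000-URL figure used as the default is the per-file limit from the sitemaps.org protocol, and `sitemapindex`, `sitemap`, and `loc` are the protocol's element names for an index file. The helper names, the page count, and the file naming scheme are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # per-file limit in the sitemaps.org protocol


def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a list of URLs into consecutive chunks of at most `size` items."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls):
    """Return a sitemap index XML string that points at each child sitemap."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for sitemap_url in sitemap_urls:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = sitemap_url
    body = ET.tostring(index, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical site with 120,000 pages.
urls = [f"https://www.example.com/page-{n}" for n in range(120_000)]
chunks = chunk_urls(urls)

# Hypothetical naming scheme for the child sitemap files.
child_sitemaps = [
    f"https://www.example.com/sitemap-{i + 1}.xml" for i in range(len(chunks))
]
index_xml = build_sitemap_index(child_sitemaps)

print(len(chunks))       # 3
print(len(chunks[-1]))   # 20000
```

Each chunk would then be written out as its own sitemap file, and only the index needs to be submitted.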

Make sure each URL appears only once across your sitemaps, so that they contain no duplicates.

A small site is easiest to manage with a single sitemap, because that makes it simple to check that no URL is listed twice. Larger sites can use several: Google accepts multiple sitemaps for the same domain, usually tied together by a sitemap index file, and does not ignore the additional ones. Whichever approach you choose, keep each file within the limit of 50,000 URLs.

Sitemaps should be well organized and reflect accurate information about your website’s pages. Whenever you change your website or add new pages, update your XML file accordingly so that search engines can access the updated information. Finally, check for errors after submitting your sitemap to Search Console, because a file that is not valid XML may not be processed at all.
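A few of these problems can be caught before submitting. The following is a rough sketch of a local check, not a substitute for the report in Search Console. It only tests that the file is well-formed XML, that every `loc` is an absolute http(s) URL, and that no URL is repeated. The `check_sitemap` function and the sample data are made up for this example.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Prefix map for the sitemaps.org namespace, used in the element path below.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def check_sitemap(xml_text):
    """Return a list of human-readable problems found in a sitemap string."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        # A file that does not parse cannot be checked any further.
        return [f"not well-formed XML: {exc}"]

    problems = []
    seen = set()
    for loc in root.findall("sm:url/sm:loc", NS):
        url = (loc.text or "").strip()
        parts = urlparse(url)
        if parts.scheme not in ("http", "https") or not parts.netloc:
            problems.append(f"not an absolute URL: {url!r}")
        if url in seen:
            problems.append(f"duplicate URL: {url}")
        seen.add(url)
    return problems


# Hypothetical sitemap with one relative URL and one duplicate.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>/about</loc></url>
  <url><loc>https://www.example.com/</loc></url>
</urlset>"""

for problem in check_sitemap(sample):
    print(problem)
```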

If you want to block a page from search engine crawlers, use the robots.txt file. Never use a sitemap for this purpose.

The robots.txt file also plays a role in SEO, but it does a different job. It tells crawlers which parts of your site they may and may not request. A sitemap provides data and details about your website, while robots.txt keeps crawlers away from a page. Leaving a page out of your sitemap does not hide it, because search engines can still reach it through links. Note that robots.txt blocks crawling rather than indexing, so a page that must stay out of search results entirely needs a noindex directive instead.
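To see how crawlers interpret robots.txt rules, you can test them with `urllib.robotparser` from Python's standard library. This is a small sketch: the rules, paths, and example.com URLs are invented for illustration, and `is_crawlable` is a hypothetical helper. Real crawlers may handle edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. The Sitemap line shows that robots.txt
# can also point crawlers at your sitemap.
robots_txt = """\
User-agent: *
Disallow: /admin/
Disallow: /cart

Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())


def is_crawlable(url, user_agent="*"):
    """Return True if the parsed rules allow `user_agent` to fetch `url`."""
    return parser.can_fetch(user_agent, url)


print(is_crawlable("https://www.example.com/blog/post"))    # True
print(is_crawlable("https://www.example.com/admin/login"))  # False
```

The blocked pages are listed in robots.txt and nowhere else; the sitemap simply does not mention them.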

Sitemaps are used to communicate with search engines about your site and help them understand it better for indexing and ranking.

A sitemap is a file that contains information about the content and structure of your site. Sitemaps are useful for the following reasons:

- They make you decide which pages you want search engines to index and which to leave out of the list.

- They let you signal which pages on your site matter most, so that search engines can decide what to prioritize when indexing. The protocol has an optional priority value for this, although Google has said it ignores that value, so treat it as a hint at best. This matters if you want certain pages on your website to rank well for certain keywords. If those pages aren’t indexed properly or don’t have enough internal links pointing to them, they won’t rank as well as they could.